vRA 7.x puts a heavy focus on the user experience (UX), starting with two of the most critical areas: first deploying the solution, then configuring it. Following through on the promise of a more streamlined deployment experience, the vRA 7 release made a significant UX leap with the debut of a wizard-driven, fully automated installation of the entire platform, along with automated initial configuration. And all of this comes in a significantly smaller deployment architecture.

The overall footprint of vRA has been drastically reduced. A typical highly available 6.x implementation needed at least 8 VAs just to cover the core services (not counting the IaaS/Windows components and the external App Services VA). In contrast, vRA 7’s deployment architecture brings all of that down to a single pair of VAs for core services. Once deployed, just 2 load-balanced VAs deliver vRA’s framework services, Identity Manager (SSO/vIDM), vPostgres DB, vRO, and RabbitMQ, all clustered and configurable behind a single load balancer VIP and a single SSL cert. All that goodness now runs on 2 VAs, and it is all configured automatically (!) during deployment.
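Because every core service sits behind one VIP and one SSL cert, that certificate typically has to be valid for the VIP FQDN and for each appliance FQDN. The sketch below, assuming the hostnames from the VM table later in this post, checks whether a certificate’s subject alternative names (SANs) cover every required name, with simple left-most-label wildcard matching. Extracting the SAN list from a real certificate is out of scope; `cert_sans` is a hypothetical input you would supply.

```python
"""Check that one SSL certificate covers the vRA VIP and both appliance FQDNs."""

# Hostnames taken from the VM table; adjust for your environment.
REQUIRED_NAMES = [
    "vrademo.mgmt.local",       # vRA VA VIP
    "fde-vrava01.mgmt.local",   # vRA VA 01
    "fde-vrava02.mgmt.local",   # vRA VA 02
]


def san_matches(pattern: str, hostname: str) -> bool:
    """Return True if a SAN entry matches hostname.

    A wildcard is honored only as the whole left-most label ("*.mgmt.local")
    and matches exactly one label, as in common TLS hostname checking.
    """
    pattern = pattern.lower().rstrip(".")
    hostname = hostname.lower().rstrip(".")
    if pattern.startswith("*."):
        head, sep, rest = hostname.partition(".")
        return bool(head) and bool(sep) and rest == pattern[2:]
    return pattern == hostname


def missing_names(cert_sans, required=REQUIRED_NAMES):
    """List required hostnames that no SAN entry covers."""
    return [name for name in required
            if not any(san_matches(san, name) for san in cert_sans)]


if __name__ == "__main__":
    # Hypothetical SAN list that forgot the second appliance.
    sans = ["vrademo.mgmt.local", "fde-vrava01.mgmt.local"]
    print(missing_names(sans))  # ['fde-vrava02.mgmt.local']
```

A wildcard such as `*.mgmt.local` would cover all three names here, but many teams prefer explicit SANs so the certificate documents exactly which hosts it serves.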

While the IaaS (.NET) components remain external, several services have moved to the VA(s). That trend will continue as more services make the move, eventually eliminating the Windows dependencies altogether. So, for now, and in the spirit of UX, it’s all about making even those components a seamless part of the deployment.

High-Level Overview

- Production deployments of vRealize Automation (vRA) should be configured for high availability (HA)

- The vRA Deployment Wizard supports two deployment types: Minimal (staging / POC) and Enterprise (distributed / HA), the latter for production-ready deployments per the Reference Architecture

- Enterprise deployments require external load balancing services to support high availability and load distribution for several vRA services

- VMware validates (and documents) distributed deployments with F5 and NSX load balancers

- This document provides a sample configuration of a vRealize Automation 7.2 Distributed HA Deployment Architecture using VMware NSX for load balancing
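This design load-balances three services, each behind its own VIP from the VM table: the vRA appliances, the IaaS Web servers, and the IaaS Manager Service hosts. The sketch below models those pools in plain Python and shows the shape of the up/down decision a load balancer health monitor makes. The monitor paths and expected strings are assumptions based on our reading of VMware’s vRA 7.x load-balancing guidance (`/vcac/services/api/health`, `/wapi/api/status/web` expecting `REGISTERED`, `/VMPSProvision` expecting `ProvisionService`); confirm them against the guide for your exact version before configuring NSX. No NSX API is called here.

```python
"""Model the three vRA load balancer pools and a simple monitor check."""
from dataclasses import dataclass


@dataclass(frozen=True)
class Pool:
    vip: str            # VIP FQDN from the VM table
    members: tuple      # pool member FQDNs
    port: int           # HTTPS port the monitor probes
    monitor_path: str   # ASSUMED monitor URL path; verify against VMware docs
    expect_text: str    # ASSUMED substring in a healthy response ("" = status only)


POOLS = [
    Pool("vrademo.mgmt.local",
         ("fde-vrava01.mgmt.local", "fde-vrava02.mgmt.local"),
         443, "/vcac/services/api/health", ""),
    Pool("vrademoweb.mgmt.local",
         ("fde-vraiaas01.mgmt.local", "fde-vraiaas02.mgmt.local"),
         443, "/wapi/api/status/web", "REGISTERED"),
    Pool("vrademomgr.mgmt.local",
         ("fde-vraiaas03.mgmt.local", "fde-vraiaas04.mgmt.local"),
         443, "/VMPSProvision", "ProvisionService"),
]


def monitor_url(pool: Pool, member: str) -> str:
    """Build the URL a monitor would probe on one pool member."""
    return f"https://{member}:{pool.port}{pool.monitor_path}"


def is_healthy(pool: Pool, status: int, body: str) -> bool:
    """Mark a member up on HTTP 200/204 plus the expected text, if any."""
    if status not in (200, 204):
        return False
    return pool.expect_text in body


if __name__ == "__main__":
    for pool in POOLS:
        for member in pool.members:
            print(pool.vip, "->", monitor_url(pool, member))
```

Keeping the pool definitions as data makes it easy to diff what was planned against what was actually configured on the Edge.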

Implementation Overview

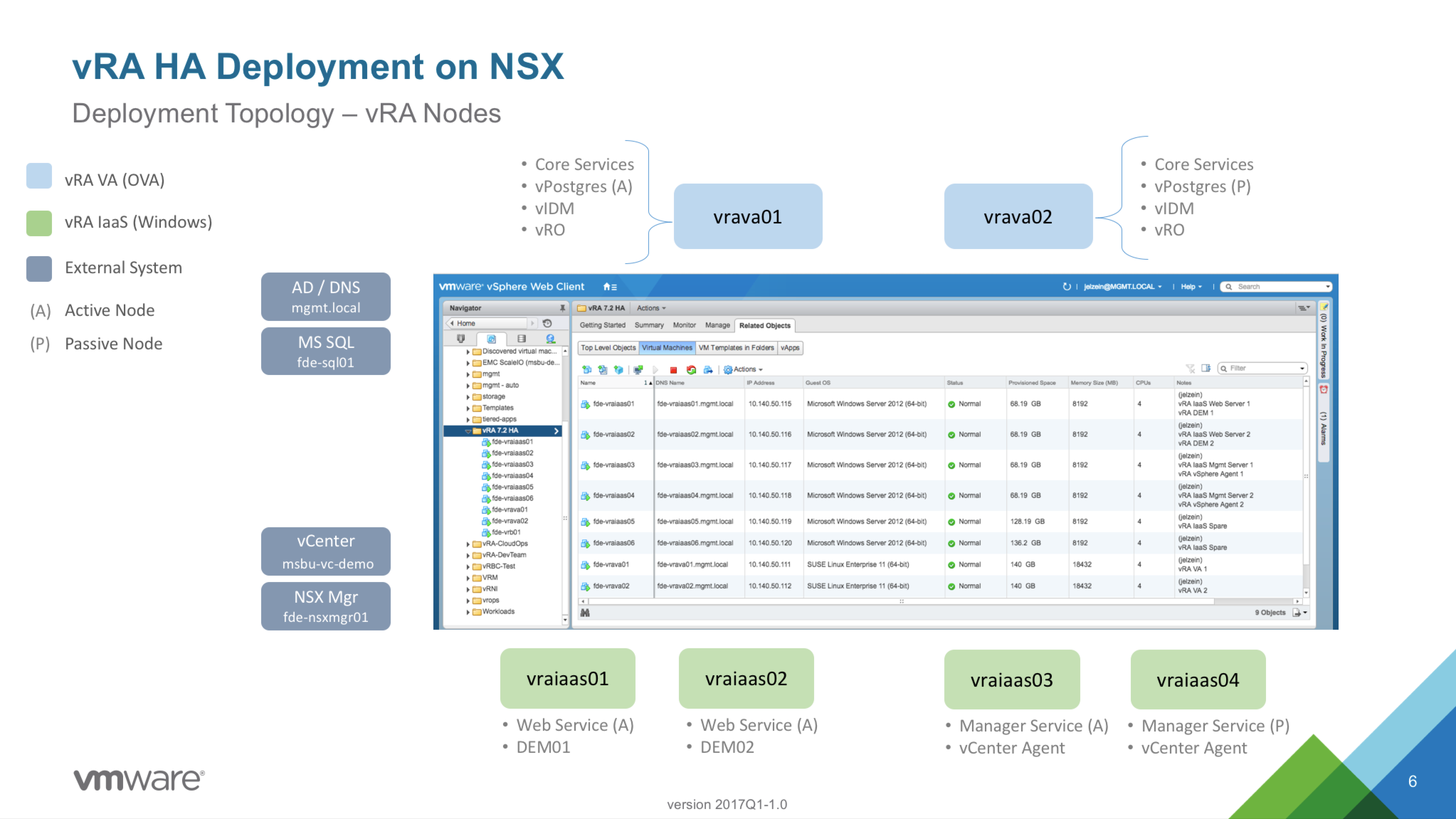

To set the stage, here’s a high-level view of the vRA nodes that will be deployed in this exercise. While a vRA POC can typically be done with 2 nodes (vRA VA + IaaS node on Windows), a distributed deployment can scale from a minimum of 4 to a dozen or more components, depending on the expected scale, which is driven primarily by user access and concurrent operations. We will be deploying six (6) nodes in total: two (2) vRA appliances and four (4) Windows machines to support vRA’s IaaS services. This sits somewhere between a “small” and a “medium” enterprise deployment, and it makes a good starting point that balances scale and supportability.

A pair of VAs will provide all the core vRA services. In vRA 7.2, we now support (and recommend as a best practice) embedded vRO and vPostgres DB instances; these services are automatically configured and clustered at deployment time. vIDM is also automatically configured across the two VAs, but a couple of post-install configuration steps are needed to provide highly available access controls.

Rather than installing the required Distributed Execution Managers (DEM-Os) and Endpoint Agents on dedicated hosts, I’m opting to co-locate them on the IaaS servers: DEMs on the Web servers and Agents on the Manager servers. This is a supported configuration and works well until additional resources are needed, at which point moving these services to dedicated hosts is a straightforward process.
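The co-location plan above can be sanity-checked mechanically: each IaaS role should land on at least two nodes, so that losing one host never removes a role entirely. The sketch below encodes the placement from the VM table as a hypothetical Python mapping; the two-node threshold is our HA assumption for this design, not a stated VMware rule.

```python
"""Check that each co-located IaaS role is spread across at least two nodes."""
from collections import defaultdict

# Role placement from the VM table (DEMs on Web nodes, Agents on Manager nodes).
PLACEMENT = {
    "fde-vraiaas01.mgmt.local": {"web", "dem"},
    "fde-vraiaas02.mgmt.local": {"web", "dem"},
    "fde-vraiaas03.mgmt.local": {"manager", "agent"},
    "fde-vraiaas04.mgmt.local": {"manager", "agent"},
}


def nodes_per_role(placement):
    """Invert node -> roles into role -> sorted list of nodes."""
    roles = defaultdict(list)
    for node, node_roles in placement.items():
        for role in node_roles:
            roles[role].append(node)
    return {role: sorted(nodes) for role, nodes in roles.items()}


def single_points_of_failure(placement, minimum=2):
    """Return roles hosted on fewer than `minimum` nodes, sorted."""
    return sorted(role for role, nodes in nodes_per_role(placement).items()
                  if len(nodes) < minimum)


if __name__ == "__main__":
    print(single_points_of_failure(PLACEMENT))  # []
    # Dropping one Manager node leaves manager and agent on a single host.
    reduced = {n: r for n, r in PLACEMENT.items() if n != "fde-vraiaas04.mgmt.local"}
    print(single_points_of_failure(reduced))  # ['agent', 'manager']
```

When DEMs or Agents later move to dedicated hosts, only the mapping changes; the check stays the same.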

Virtual Machines

| Name | IP Address | Description |
|---|---|---|
| fde-vrava01.mgmt.local | 10.140.50.111 | vRealize Automation VA 01 |
| fde-vrava02.mgmt.local | 10.140.50.112 | vRealize Automation VA 02 |
| fde-vraiaas01.mgmt.local | 10.140.50.115 | vRA IaaS Services 1 (Web / DEM01) |
| fde-vraiaas02.mgmt.local | 10.140.50.116 | vRA IaaS Services 2 (Web / DEM02) |
| fde-vraiaas03.mgmt.local | 10.140.50.117 | vRA IaaS Services 3 (Mgr / Agent) |
| fde-vraiaas04.mgmt.local | 10.140.50.118 | vRA IaaS Services 4 (Mgr / Agent) |
| fde-vraiaas05.mgmt.local (optional) | 10.140.50.119 | vRA IaaS Services 5 (optional) |
| fde-vraiaas06.mgmt.local (optional) | 10.140.50.120 | vRA IaaS Services 6 (optional) |
| vrademo.mgmt.local | 10.140.50.110 | vRA VA VIP |
| vrademoweb.mgmt.local | 10.140.50.121 | vRA IaaS Web VIP |
| vrademomgr.mgmt.local | 10.140.50.122 | vRA IaaS Manager VIP |
| fde-vrb01.mgmt.local | 10.140.50.123 | vRealize Business for Cloud (vRBC) VA |
| NSX Components | | |
| fde-nsxmgr01.mgmt.local | 10.140.50.131 | NSX Manager |
| fde-nsxesg01.mgmt.local | 10.140.50.132 | NSX Edge Services Gateway |
| fde-nsxesg02.mgmt.local | 10.140.50.133 | NSX Edge Services Gateway |
| fde-nsxdlr01.mgmt.local | 10.140.50.134 | NSX Distributed Logical Router |
| fde-nsxdlr02.mgmt.local | 10.140.50.135 | NSX Distributed Logical Router |
| fde-nsx-ctrl-01 | 10.140.50.140 | NSX Controller 01 |
| fde-nsx-ctrl-02 | 10.140.50.141 | NSX Controller 02 |
| fde-nsx-ctrl-03 | 10.140.50.142 | NSX Controller 03 |
| Shared Services | | |
| msbu-vc-demo.mgmt.local | 10.140.50.86 | vCenter Server, Demo |
| fde-sql01.mgmt.local | 10.140.50.125 | Dedicated SQL instance for vRA IaaS |
| mgmt-w-ad1.mgmt.local | 10.140.51.1 | Active Directory / DNS |
| mgmt-w-ad2.mgmt.local | 10.140.51.2 | Active Directory / DNS |
| fde-adfs01.mgmt.local | 10.140.50.124 | Windows 2008 R2 ADFS (mgmt.local) |
| Templates | | |
| t-win2k12-lab | | Windows 2012 Template |
| t-centos7-lab | | CentOS 7 x64 Template |
| t-ubuntu-14-04-3 | | Ubuntu 14.04.3 x64 Template |
| t-photon-1.0-ga | | PhotonOS 1.0 Template |
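With this many FQDNs and static IPs to reserve, a quick consistency pass over the inventory before deployment can catch copy-paste slips such as a repeated hostname or address. A minimal sketch, assuming the table is kept as simple (name, IP) pairs; only a few rows are reproduced here, and the standard `ipaddress` module is used to reject malformed addresses.

```python
"""Flag duplicate hostnames or IP addresses in a planned VM inventory."""
import ipaddress
from collections import Counter

# A few (name, IP) rows from the VM table; extend with the full list.
INVENTORY = [
    ("fde-vrava01.mgmt.local", "10.140.50.111"),
    ("fde-vrava02.mgmt.local", "10.140.50.112"),
    ("vrademo.mgmt.local", "10.140.50.110"),
    ("vrademoweb.mgmt.local", "10.140.50.121"),
    ("vrademomgr.mgmt.local", "10.140.50.122"),
]


def duplicates(values):
    """Return values that appear more than once, sorted."""
    return sorted(v for v, n in Counter(values).items() if n > 1)


def check_inventory(rows):
    """Validate each IP and report duplicate names and addresses."""
    names = [name.lower() for name, _ in rows]
    # ip_address() raises ValueError on a malformed address.
    ips = [str(ipaddress.ip_address(ip)) for _, ip in rows]
    return {"names": duplicates(names), "ips": duplicates(ips)}


if __name__ == "__main__":
    print(check_inventory(INVENTORY))  # {'names': [], 'ips': []}
```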

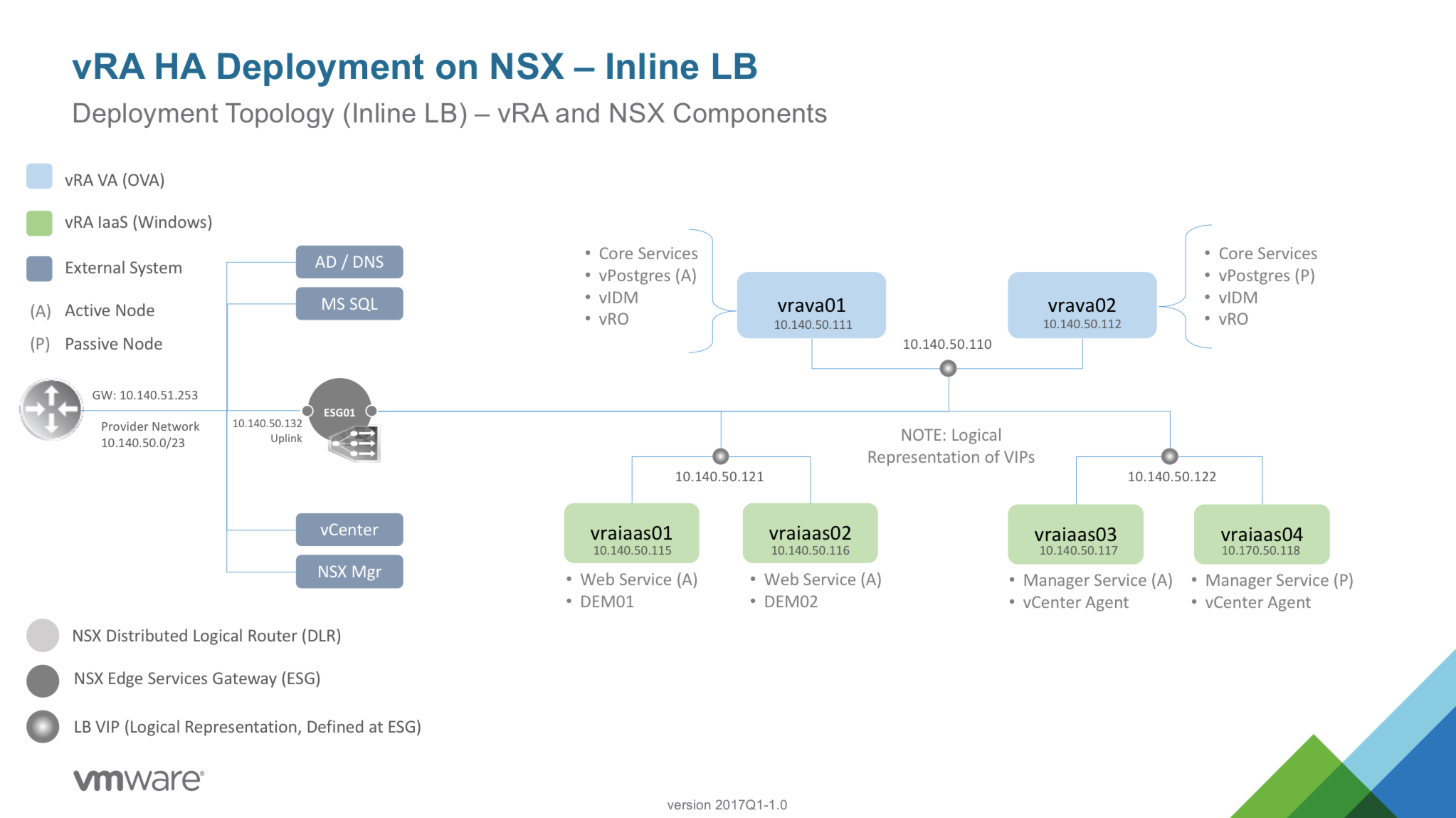

High-Level Deployment Architecture

vRA HA Deployment on NSX using Inline Load Balancing |

|

|---|---|

|

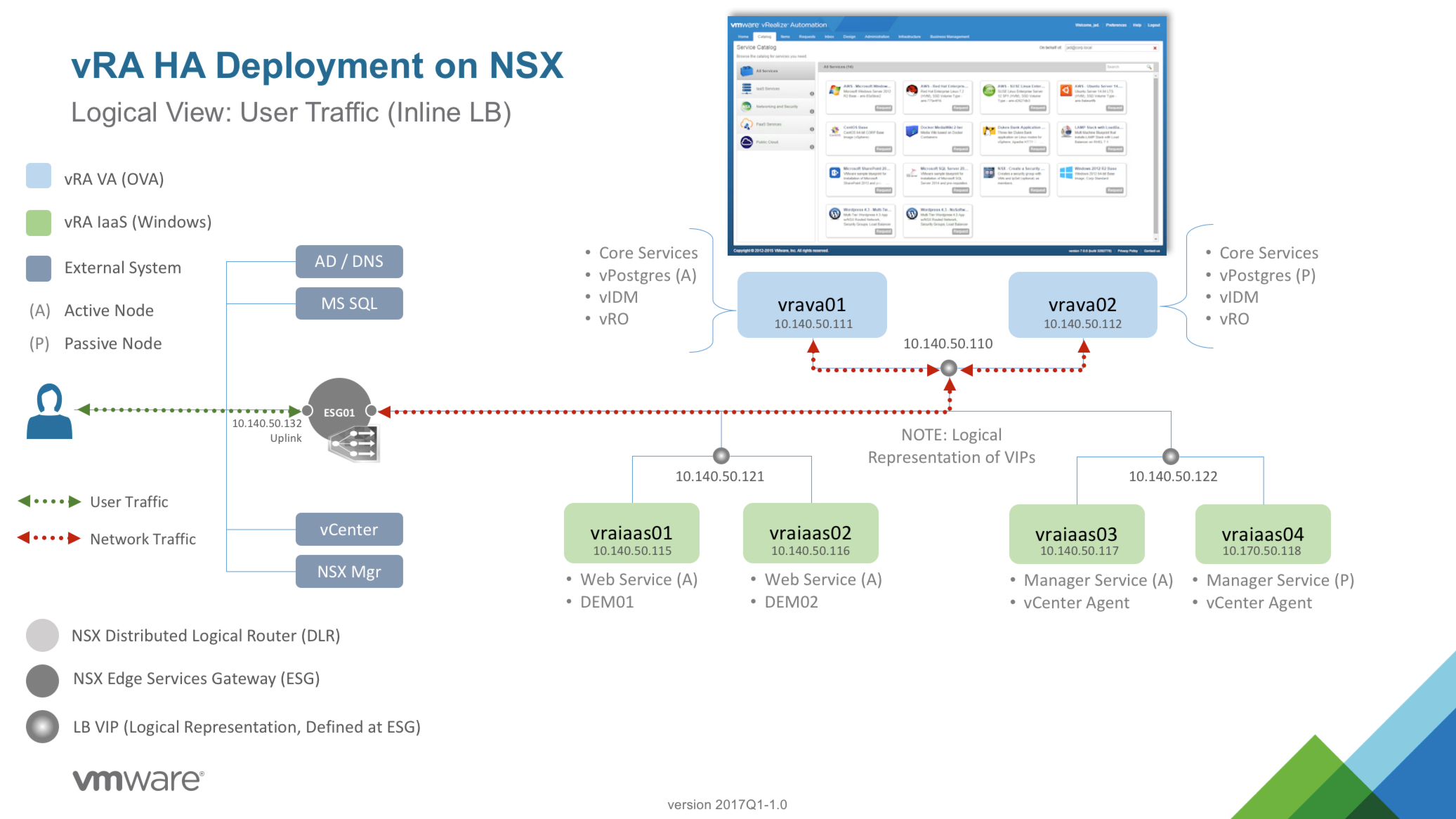

User Session Traffic, Inline Load Balancing |

|

|---|---|

|

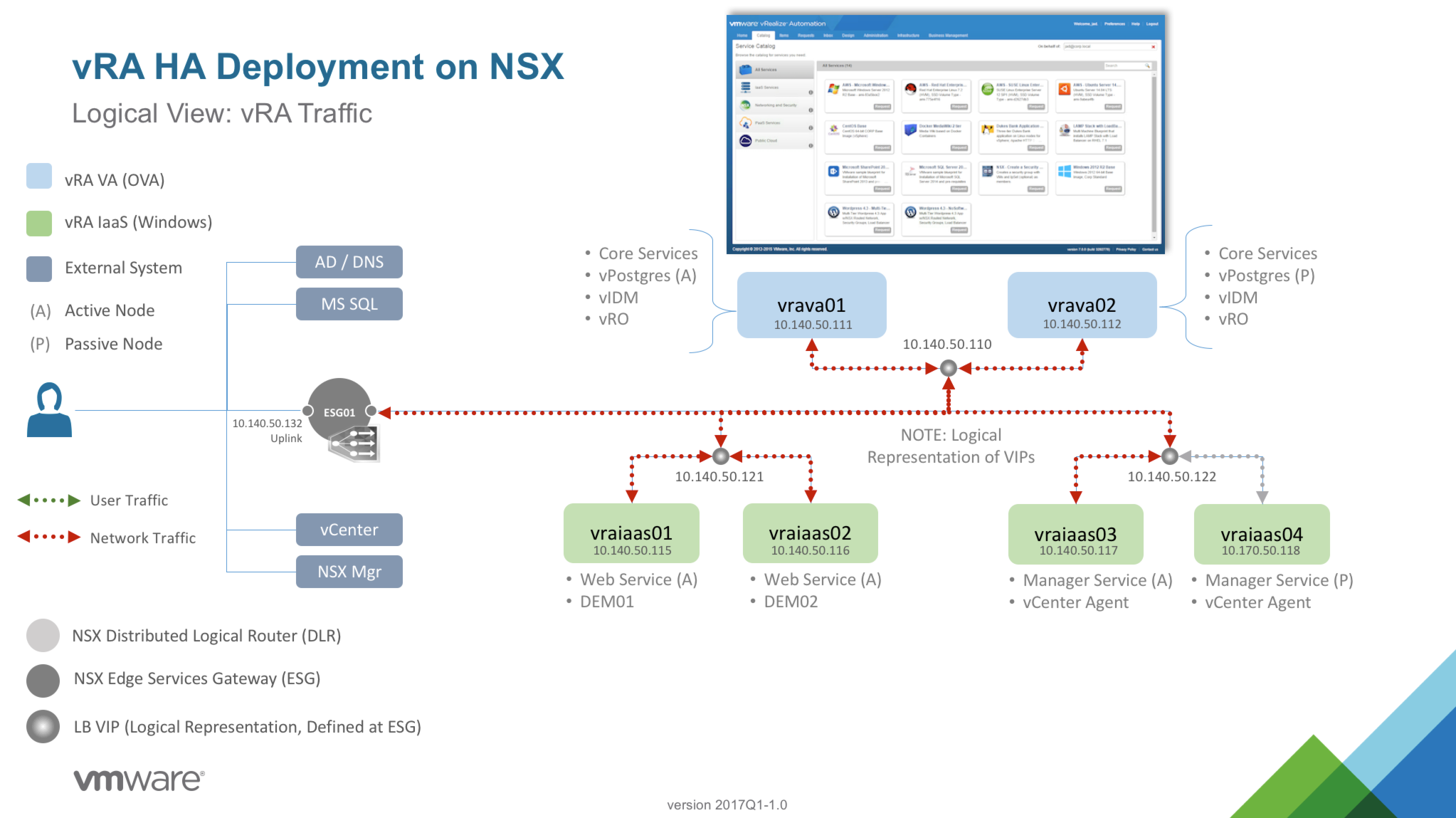

vRA System Traffic |

|

|---|---|

|

vRA Deployment Check List

There are a handful of expected external dependencies that must be in place ahead of the deployment: Active Directory, rock-solid DNS, and MS SQL are prerequisites. Obviously, we’ll also need a vCenter Server to deploy the nodes to and, eventually, to use as a resource endpoint for machine provisioning. Finally, NSX Manager should be deployed and configured per best practices.
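In practice, “rock-solid DNS” means every node and VIP resolves forward (name to IP) and reverse (IP back to the same name). A minimal sketch using Python’s standard `socket` module, with expected addresses taken from the VM table; the `forward` and `reverse` parameters are injectable (our design choice, not a requirement) so the logic can be exercised without a live DNS server.

```python
"""Verify forward and reverse DNS for planned vRA hostnames."""
import socket

# Expected name -> IP pairs from the VM table; extend with every node and VIP.
EXPECTED = {
    "vrademo.mgmt.local": "10.140.50.110",
    "fde-vrava01.mgmt.local": "10.140.50.111",
    "fde-vrava02.mgmt.local": "10.140.50.112",
}


def check_dns(expected, forward=socket.gethostbyname,
              reverse=lambda ip: socket.gethostbyaddr(ip)[0]):
    """Return a list of human-readable problems (an empty list means clean)."""
    problems = []
    for name, ip in expected.items():
        try:
            got_ip = forward(name)
        except OSError as exc:  # socket.gaierror is a subclass of OSError
            problems.append(f"{name}: forward lookup failed ({exc})")
            continue
        if got_ip != ip:
            problems.append(f"{name}: resolves to {got_ip}, expected {ip}")
        try:
            got_name = reverse(ip)
        except OSError as exc:  # socket.herror is a subclass of OSError
            problems.append(f"{ip}: reverse lookup failed ({exc})")
            continue
        if got_name.lower().rstrip(".") != name.lower():
            problems.append(f"{ip}: reverse is {got_name}, expected {name}")
    return problems


if __name__ == "__main__":
    for line in check_dns(EXPECTED) or ["DNS OK"]:
        print(line)
```

Run it from a machine that uses the same DNS servers as the appliances, since that is the view vRA will see.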

- Document Review

- Infrastructure

- DNS

- External Dependencies

- Service Accounts

- SSL Certs

- Misc

Next Step: 02 – Deploy and Configure NSX

virtualjad

![[virtualjad.com]](https://www.virtualjad.com/wp-content/uploads/2018/11/vj_logo_med_v3.png)